We Need A Cybersecurity Awareness Campaign And Civil Defense Force

Our nation is in a latent war in the information sphere. Fake news, troll farms, and bots, encouraged by nation-states like Russia, are undermining Western political and social edifices. This real and pervasive attack is weakening both state and international security. At the same time, the American public has not been successfully mobilized to support a war effort since World War II. Many Americans want to “do something” about the current state of affairs.

We propose revitalizing two successful WWII efforts in order to regain security in the information sphere. The first is a public service announcement (PSA) series with bold iconographic images and simple phrases to educate Americans about the constant disinformation campaigns being waged against them. The second is a Cyber Disinformation Civil Defense Force composed of civilian volunteers and run by social media platforms in cooperation with the DoD, DHS, and research institutions studying disinformation.

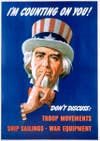

America’s resounding success in propaganda during World War II is evidenced by how slogans like “Loose Lips Sink Ships” and icons like Rosie the Riveter are still recognized and reproduced today. “Don’t feed the trolls” is a common response to suspected provocateurs online, and it needs to be amplified and expanded on. Impactful public service announcements have proven to be an extremely cost-effective means of countering problems like disease spread and of modifying collective behavior. If disinformation spreads the way information or disease does, it follows that PSAs would be a cost-effective defensive measure against it. PSA topics like evaluating sources, critical thinking, and how online social networks work will bolster citizens’ resistance to being infected with foreign, hostile information narratives. We need to step up our counter-propaganda game and catch people’s attention with visually arresting, easily absorbed PSAs that appeal to national unity against the psychological warfare being perpetrated.

Those iconographic posters from World War II appealed to the public to contribute to the war effort in tangible ways: victory gardens, scrap drives, rationing, buying bonds. We believe that average Americans have an enduring desire to contribute tangibly, and that standing up a Cyber Disinformation Civil Defense Force would give them a medium for such contribution in information warfare.

Volunteers would take a 1- or 2-hour training course on disinformation campaigns and how to spot the various weapons of disinformation. After completing training, they would be able to flag content for review by social media platforms. This approach uses the same “Wisdom of the Crowds” model that has been very successful in forecasting and prediction and, more recently, is being employed in Newark to monitor public cameras for crime. An important advantage of this approach is that humans can still hunt malicious actors and appreciate nuance more effectively than machine learning can. In addition, broadening the data set used by machines with the results of large-scale human efforts will dramatically improve machine learning countermeasures to information warfare. Incentives to maximize participation would also be a worthwhile investment. Similar to the Kaggle model, each month the individual and the team with the highest accuracy combined with the largest volume of correctly flagged content would win a financial reward. As in studies of superforecasters, the winners would also be interviewed to understand what strategies have made them so successful, and those strategies can then be incorporated into future training and PSAs.
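The monthly reward could be computed in many ways, since "accuracy combined with volume" leaves the exact formula open. The sketch below is only one possible reading, under the assumption that a volunteer's score is accuracy multiplied by the number of flags the platform upheld. The names `VolunteerStats`, `monthly_score`, and `monthly_winner` are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass
class VolunteerStats:
    name: str
    flags_submitted: int  # every flag the volunteer raised this month
    flags_confirmed: int  # flags the platform upheld after its own review

    @property
    def accuracy(self) -> float:
        # A volunteer with no flags has no track record, so score them at zero.
        if self.flags_submitted == 0:
            return 0.0
        return self.flags_confirmed / self.flags_submitted


def monthly_score(stats: VolunteerStats) -> float:
    # Assumed combination rule (not specified in the proposal):
    # accuracy multiplied by the volume of correctly flagged content.
    return stats.accuracy * stats.flags_confirmed


def monthly_winner(volunteers: list[VolunteerStats]) -> VolunteerStats:
    # The highest combined score wins the monthly reward.
    return max(volunteers, key=monthly_score)


volunteers = [
    VolunteerStats("careful", flags_submitted=100, flags_confirmed=90),
    VolunteerStats("prolific", flags_submitted=300, flags_confirmed=120),
    VolunteerStats("occasional", flags_submitted=10, flags_confirmed=10),
]
winner = monthly_winner(volunteers)
```

Under this rule, a volunteer who flags indiscriminately is penalized twice, once through lower accuracy and once through fewer confirmed flags, while a perfectly accurate volunteer with very few flags cannot win on accuracy alone.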

A Wisdom of the Crowds model can also be used to weed out those who are not participating in good faith. Those who fall below a certain level of accuracy would be disenrolled, and would only be able to re-enroll after a waiting period and repeating the training. Repeatedly being disenrolled, or flagging content generally recognized as ideologically disputable but not “fake,” would result in permanent disenrollment. This works as a failsafe against volunteers abusing their flagging authority to harass, intimidate, or retaliate, and against infiltration by hostile organizations. Flagged content would be presented in an identifiable way (e.g., red typeface) until the platform investigates and either takes action or removes the flag. Users could still access the content, but hovering over the red typeface would bring up an explanation of the program and key points about disinformation. The color would be a quick visual indication to users that the content is suspect and that they should avoid sharing it or engaging with that account. Some current examples of targets for flagging would include any recently created account with few friends that is prolifically sharing or commenting on controversial content, accounts re-tweeting or sharing dozens of times an hour, and “news” that recycles pictures from previous news stories and presents them as contemporary.
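The disenrollment rules described above can be sketched as a small status check. This is an illustration under stated assumptions, not a specification: the thresholds `MIN_ACCURACY`, `MAX_SUSPENSIONS`, and `MAX_DISPUTABLE_FLAGS` are invented placeholder values, and the sketch assumes that "repeatedly" applies both to disenrollments and to flags on merely disputable content. The waiting period and retraining step are left out.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    ACTIVE = "active"
    SUSPENDED = "suspended"  # may re-enroll after a waiting period and retraining
    BANNED = "banned"        # permanent disenrollment


# Hypothetical thresholds; the proposal does not give concrete values.
MIN_ACCURACY = 0.7
MAX_SUSPENSIONS = 3
MAX_DISPUTABLE_FLAGS = 5


@dataclass
class Volunteer:
    flags_submitted: int = 0
    flags_confirmed: int = 0
    disputable_flags: int = 0  # flags on content judged disputable but not fake
    suspensions: int = 0
    status: Status = Status.ACTIVE


def review(v: Volunteer) -> Status:
    """Apply the disenrollment rules once and return the updated status."""
    if v.status is Status.BANNED:
        return v.status
    # Repeatedly flagging disputable-but-not-fake content is treated as abuse.
    if v.disputable_flags >= MAX_DISPUTABLE_FLAGS:
        v.status = Status.BANNED
        return v.status
    # With no flags yet there is nothing to judge, so assume good standing.
    accuracy = v.flags_confirmed / v.flags_submitted if v.flags_submitted else 1.0
    if v.status is Status.ACTIVE and accuracy < MIN_ACCURACY:
        v.suspensions += 1
        # Too many disenrollments in total makes the next one permanent.
        v.status = Status.BANNED if v.suspensions >= MAX_SUSPENSIONS else Status.SUSPENDED
    return v.status
```

For example, `review(Volunteer(flags_submitted=100, flags_confirmed=50))` returns `Status.SUSPENDED` under these placeholder thresholds, while the same record with two prior suspensions returns `Status.BANNED`.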

Data on all flags and their disposition would be collected and analyzed over time by the DoD and research institutions. This would enable our professional forces to distill best practices, crowdsource potential targets for further investigation, and build a dataset for better predictive models to screen for malicious actors. A populace better educated about the threats will be less susceptible to disinformation, and empowering volunteers to aid in the fight is a win-win.

Harnessing the Wisdom of the Crowd to sift the enormous amount of online content subject to disinformation provides several distinct advantages. First, it would be a huge boon for information operations while giving civilians an opportunity to make a meaningful contribution. Second, hiring personnel to sift so much data would be prohibitively costly, and as government agents they would raise concerns of politically motivated censorship. A large group of individual citizen volunteers would be more robust to such concerns. Third, it thwarts foreign PSYOP efforts by mobilizing their targets against them.

Ultimately, this program would give average American citizens an opportunity to “do something”: to help ensure our divisions are not amplified by foreign psychological operations on our populace. It could be an invaluable home-grown contribution to the cyber war effort.

Jennifer Cruickshank is currently an Army spouse with a B.A. from Northwestern University and an M.Th. from the University of Edinburgh. Captain Iain J. Cruickshank is a USMA graduate, class of 2010, and is currently a PhD candidate in Societal Computing at Carnegie Mellon University as a National Science Foundation Graduate Research Fellow. The views expressed in this article do not officially represent the views of the U.S. Army, the U.S. military, or the United States Government, and are the views of the authors only.