The Army is developing a system to allow autonomous ground robots to communicate with soldiers through natural conversations — and, in time, learn to respond to soldier instructions no matter how informal or potentially crass they may be.

Researchers from the U.S. Army Combat Capabilities Development Command’s Army Research Laboratory, working in collaboration with the University of Southern California’s Institute for Creative Technologies, have developed a new capability that allows conversational dialogue between soldiers and autonomous systems.

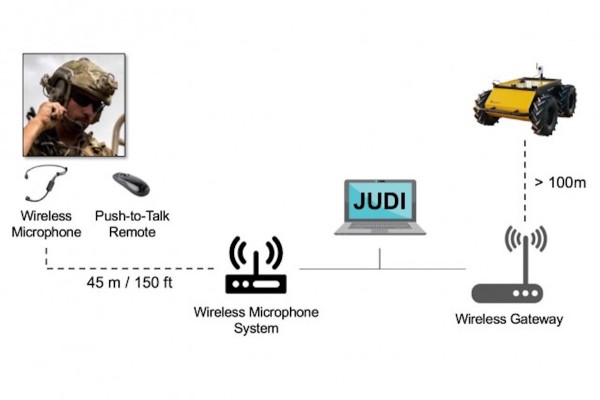

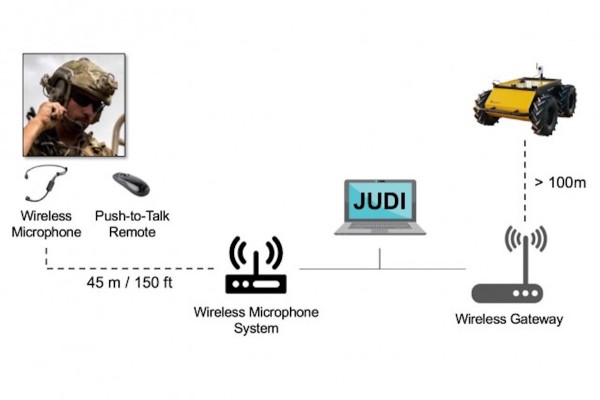

The capability, known as the Joint Understanding and Dialogue Interface (JUDI), is elegant in its simplicity: the system processes spoken language instructions from soldiers, derives the core intent, and carries out a set of functions, according to Dr. Matthew Marge, a computer scientist at ARL.

JUDI also allows Army robots to talk back to soldiers by asking for clarification, providing a status update, or executing a task, creating “bi-directional conversational interactions” — yes, a literal dialogue — between soldiers and their bionic wingmen.

More importantly, however, JUDI can learn on the fly, both adapting to the changing physical environment during a mission and deciphering the true intent of a soldier’s instructions regardless of the exact words they use, according to Marge.

“Basic instructions are things like ‘explore the room ahead of you’ or ‘scout route Bravo,’ and we can have the robot actually execute that,” Marge told Task & Purpose. “But JUDI is meant to interpret intent from spoken language without relying on specific phrasing, so a soldier can interact with a system using natural language and [isn’t] constrained by the words they say.”

In short, JUDI-equipped robots can interpret natural soldier commands in the field, no matter how informally those commands are structured — “JUDI, get the fuck back here” comes to mind — and translate them into concrete actions.

“We want soldiers to feel less constrained, and we know soldiers won’t want to memorize a list of commands to interact with an AI agent, and JUDI reduces that burden,” Marge told Task & Purpose. “The algorithm listens to the verbal instruction and finds the highest word overlap to apply to a direction.”
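To make the idea of matching by “highest word overlap” concrete, here is a toy keyword-overlap matcher. It is a minimal sketch under stated assumptions, not ARL’s implementation: the intent names, the keyword sets, and the `match_intent` function are all hypothetical, and the score is simply the number of words an instruction shares with each intent’s keyword set.

```python
import re
from typing import Optional, Set

# Hypothetical intents and keyword sets; NOT JUDI's actual command inventory.
INTENT_KEYWORDS = {
    "explore_area": {"explore", "search", "check", "room", "area", "ahead"},
    "scout_route": {"scout", "route", "recon", "path"},
    "return_to_operator": {"come", "back", "return", "here"},
    "stop": {"stop", "halt", "freeze", "hold"},
}


def tokenize(utterance: str) -> Set[str]:
    """Lowercase the utterance and split it into a set of word tokens."""
    return set(re.findall(r"[a-z']+", utterance.lower()))


def match_intent(utterance: str) -> Optional[str]:
    """Return the intent whose keyword set shares the most words with the
    utterance. Ties go to the intent listed first; None means no word
    overlapped at all, which a robot could treat as a cue to ask for
    clarification (an assumption made for this sketch)."""
    tokens = tokenize(utterance)
    best_intent, best_score = None, 0
    for intent, keywords in INTENT_KEYWORDS.items():
        score = len(tokens & keywords)  # count of shared words
        if score > best_score:
            best_intent, best_score = intent, score
    return best_intent


if __name__ == "__main__":
    for command in ["Explore the room ahead of you", "Scout route Bravo",
                    "JUDI, get back here", "What lock is on the door?"]:
        print(f"{command!r} -> {match_intent(command)}")
```

Because the matcher only counts shared words, filler and informal phrasing (“JUDI, get back here”) still land on the right intent. A pure bag-of-words score like this ignores the physical context (maps, doors, the robot’s position) that Marge says separates JUDI from consumer assistants.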

While JUDI may sound akin to commercially available voice-activated personal assistants like Siri and Alexa, there are major differences: namely, that JUDI can learn based on changing conditions on the ground.

“You can’t tell Alexa and Siri, ‘hey, what lock is on the door ahead?’” Marge told Task & Purpose. “We’re looking not just at what the soldier says, but how the robot interprets the immediate physical context of the world — where it is, whether it has a map, the representation of the environment, and so on.”

While the JUDI capability has mainly been applied to small ground robots for search and rescue and reconnaissance functions, the program is part of the Army’s Next Generation Combat Vehicle effort, a major modernization priority for the service that seeks to potentially replace both the Bradley Infantry Fighting Vehicle and the M1 Abrams tank.

“We envision this most immediately functioning in collaborative exploration like scouting and searching, combining the dialogue ability of JUDI with robots that can go into hazardous environments,” Marge told Task & Purpose.

“It could be mounted or dismounted operations, but you could have JUDI interact with [unmanned ground vehicles] from a mounted position, or you could have soldiers on the ground interacting with robot teammates,” he added.

JUDI has been in testing for the last several years and likely won’t appear in a major testbed such as the Army’s nascent Optionally Manned Fighting Vehicle for some time. In the nearer term, ARL researchers plan to evaluate the “robustness” of JUDI on physical mobile robot platforms at an upcoming field test spanning the Artificial Intelligence for Maneuver and Mobility (AIMM) Essential Research Program (ERP), currently planned for September.

But in the meantime, according to ARL, JUDI will be integrated into the CCDC ARL Autonomy Stack, a suite of algorithms, libraries, and software components available to a wide range of unmanned efforts, such as the Defense Advanced Research Projects Agency (DARPA) ‘Squad X’ experiment that in 2019 partnered dismounted Marines with unmanned robots and drones.

All of this means that the JUDI capability might show up in a semi-autonomous system near you sooner rather than later.

“We’re focused on ground robots, because that’s where things get messy, that’s where all the action is,” Marge told Task & Purpose. “We’re looking at robots that can move around in the world ahead of soldiers to keep them out of harm’s way.”